
Patients who have experienced acquired or genetic brain injury and are unable to speak or communicate clearly often seek augmented assistance to restore ease of conversation.

We are changing and enhancing how brain-machine interfaces (BMIs) are used and studied in patients with limited language capability. Conventionally, a patient spells out words while the interface decodes particular signals evoked in response to stimuli. This takes an extraordinary amount of patience and time, and the resulting delays make communication slow and frustrating.

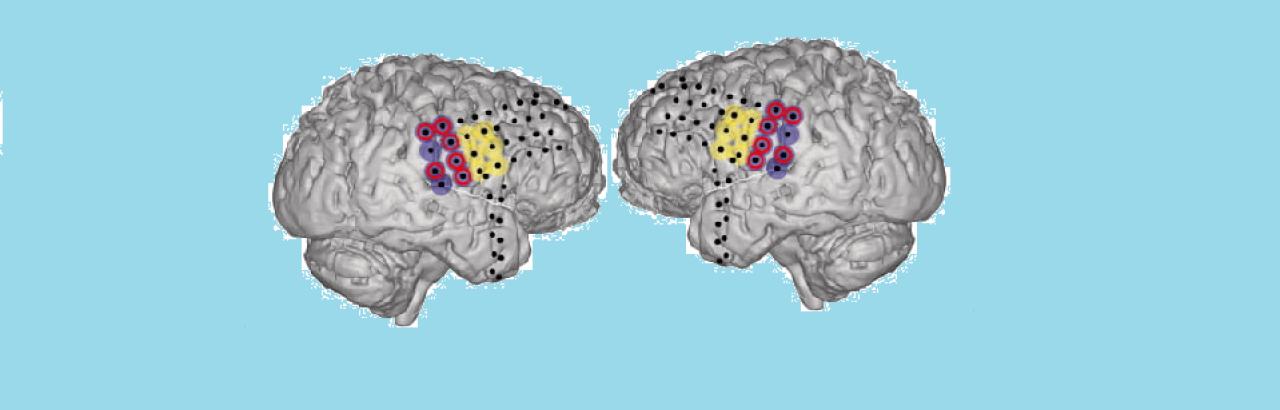

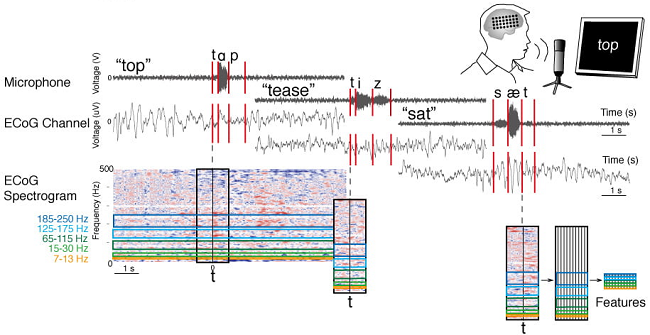

Our lab is changing this by decoding the patient's intended speech from signals in speech motor cortex and by using predictions based on speech patterns. The BMI decodes entire words and sentences with minimal delay, much as a smartphone predicts words during texting. Words are composed of groups of sounds called phonemes. Signals recorded from the surface of the motor cortex contain information about phonemes based on the location of phoneme production. We are learning that we can decode the entire range of American English phonemes from motor cortex using subdural field potentials (Mugler et al., J Neural Eng. 2014), and we are investigating how the brain controls speech production. We hope to apply this work across many fields and conditions to provide better augmented communication.
